PIGLeT: Language Grounding Through Neuro-Symbolic Interaction in a 3D World (ACL 2021)

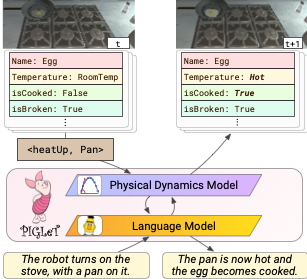

We propose PIGLeT: a model that learns physical commonsense knowledge through interaction, and then uses this knowledge to ground language. We factorize PIGLeT into a physical dynamics model and a separate language model. Our dynamics model learns not just what objects are but also what they do: glass cups break when thrown, plastic ones don't. We then use it as the interface to our language model, giving us a unified model of linguistic form and grounded meaning. PIGLeT can read a sentence, simulate neurally what might happen next, and then communicate that result through a literal symbolic representation or natural language.

Experimental results show that our model effectively learns world dynamics, along with how to communicate them. It correctly forecasts "what happens next" given an English sentence over 80% of the time, outperforming a 100x larger text-to-text approach by over 10%. Likewise, humans judge its natural language summaries of physical interactions as more accurate than those of LM alternatives. We present comprehensive analysis showing room for future work.


If the paper inspires you, please cite us:

@inproceedings{zellers2021piglet,
  title={PIGLeT: Language Grounding Through Neuro-Symbolic Interaction in a 3D World},
  author={Zellers, Rowan and Holtzman, Ari and Peters, Matthew and Mottaghi, Roozbeh and Kembhavi, Aniruddha and Farhadi, Ali and Choi, Yejin},
  booktitle={Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics},
  year={2021}
}

Training PIGLeT

PIGLeT has two parts: a physical dynamics model that learns about "how the world works" by predicting state changes, and a language model that is capable of both discriminative tasks and generation.

We train the physical dynamics model through interaction, on over 280k environment state changes. It functions like an autoencoder: an Object Encoder and an Action Encoder encode the object and the action into vectors, an Action Apply multilayer perceptron fuses them, and an Object Decoder converts the resulting vector back into a symbolic representation, decoding every attribute.
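To make that pipeline concrete, here is a minimal, untrained sketch in plain Python. It assumes a toy object described by three hypothetical attributes (`material`, `is_broken`, `is_held`) and three hypothetical actions. The class names mirror the components named above, but the vector size, the sum-of-embeddings encoder, and the two-layer MLP are illustrative choices, not PIGLeT's actual architecture. Training on observed state changes is omitted, so the printed predictions are arbitrary.

```python
import random

# Hypothetical attribute vocabulary and action set; the real model covers
# many more attributes and actions than this toy example.
ATTRIBUTES = {
    "material": ["glass", "plastic", "metal"],
    "is_broken": [False, True],
    "is_held": [False, True],
}
ACTIONS = ["pickup", "throw", "put_down"]
DIM = 8
rng = random.Random(0)  # fixed seed; all weights below are random and untrained


def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def relu(v):
    return [max(0.0, x) for x in v]


class ObjectEncoder:
    """Embeds a symbolic object by summing one vector per (attribute, value)."""

    def __init__(self):
        self.emb = {(a, v): [rng.gauss(0.0, 0.5) for _ in range(DIM)]
                    for a, vals in ATTRIBUTES.items() for v in vals}

    def __call__(self, obj):
        h = [0.0] * DIM
        for a, v in obj.items():
            h = [x + y for x, y in zip(h, self.emb[(a, v)])]
        return h


class ActionEncoder:
    """Looks up one vector per action type."""

    def __init__(self):
        self.emb = {act: [rng.gauss(0.0, 0.5) for _ in range(DIM)] for act in ACTIONS}

    def __call__(self, action):
        return self.emb[action]


class ActionApply:
    """Two-layer MLP over the concatenated [object; action] vector."""

    def __init__(self):
        self.w1 = rand_matrix(2 * DIM, 2 * DIM)
        self.w2 = rand_matrix(DIM, 2 * DIM)

    def __call__(self, h_obj, h_act):
        # h_obj + h_act is list concatenation, giving a 2 * DIM input
        return matvec(self.w2, relu(matvec(self.w1, h_obj + h_act)))


class ObjectDecoder:
    """One linear head per attribute; argmax picks the symbolic value."""

    def __init__(self):
        self.heads = {a: rand_matrix(len(vals), DIM) for a, vals in ATTRIBUTES.items()}

    def __call__(self, h):
        out = {}
        for a, vals in ATTRIBUTES.items():
            logits = matvec(self.heads[a], h)
            out[a] = vals[max(range(len(vals)), key=lambda i: logits[i])]
        return out


def predict_next_state(obj, action, enc_o, enc_a, apply_mlp, dec):
    """Encode object and action, fuse them, then decode every attribute of the post-state."""
    return dec(apply_mlp(enc_o(obj), enc_a(action)))


enc_o, enc_a, apply_mlp, dec = ObjectEncoder(), ActionEncoder(), ActionApply(), ObjectDecoder()
cup = {"material": "glass", "is_broken": False, "is_held": True}
print(predict_next_state(cup, "throw", enc_o, enc_a, apply_mlp, dec))  # arbitrary until trained
print(dec(enc_o(cup)))  # autoencoder-style reconstruction of the pre-state
```

After training, the `is_broken` head would be expected to flip to `True` when a glass object is thrown, which is the kind of dynamics described above.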

We can then combine it with any pretrained language model, using as few as 500 paired examples. This enables transfer from grounded physical knowledge to language.
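One simple way to picture such a language interface is as a learned map from a language model's sentence representation into the dynamics model's action space, fit on a small set of (sentence, action) pairs. The sketch below assumes a frozen stand-in "LM" (`lm_encode`, which just averages random word vectors), frozen action embeddings `ACTION_EMB` borrowed from the dynamics model, and a linear projection trained with plain SGD. All of these names and choices are hypothetical; this is an illustration of the idea, not PIGLeT's actual training procedure.

```python
import random

rng = random.Random(0)
DIM_LM, DIM_ACT = 6, 4

# Hypothetical action embeddings taken from an already-trained dynamics model.
ACTION_EMB = {a: [rng.gauss(0.0, 1.0) for _ in range(DIM_ACT)]
              for a in ["pickup", "throw", "put_down"]}

# Hypothetical stand-in for a frozen pretrained LM: average of per-word vectors.
VOCAB = {}


def lm_encode(sentence):
    vecs = []
    for tok in sentence.lower().split():
        if tok not in VOCAB:
            VOCAB[tok] = [rng.gauss(0.0, 1.0) for _ in range(DIM_LM)]
        vecs.append(VOCAB[tok])
    return [sum(col) / len(vecs) for col in zip(*vecs)]


def project(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def mse(W, pairs):
    total = 0.0
    for sentence, action in pairs:
        pred = project(W, lm_encode(sentence))
        total += sum((p - t) ** 2 for p, t in zip(pred, ACTION_EMB[action]))
    return total / len(pairs)


def fit_projection(pairs, lr=0.05, epochs=300):
    """SGD on 0.5 * ||W x - y||^2; the gradient w.r.t. W[i][j] is (pred_i - y_i) * x_j."""
    W = [[0.0] * DIM_LM for _ in range(DIM_ACT)]
    for _ in range(epochs):
        for sentence, action in pairs:
            x, y = lm_encode(sentence), ACTION_EMB[action]
            pred = project(W, x)
            for i in range(DIM_ACT):
                err = pred[i] - y[i]
                for j in range(DIM_LM):
                    W[i][j] -= lr * err * x[j]
    return W


def nearest_action(vec):
    """Map a projected sentence vector to the closest known action embedding."""
    return min(ACTION_EMB, key=lambda a: sum((v - e) ** 2 for v, e in zip(vec, ACTION_EMB[a])))


pairs = [
    ("the person throws the glass cup", "throw"),
    ("the person picks up the plastic cup", "pickup"),
    ("the person puts down the cup", "put_down"),
]
W0 = [[0.0] * DIM_LM for _ in range(DIM_ACT)]
print("loss before:", mse(W0, pairs))
W = fit_projection(pairs)
print("loss after:", mse(W, pairs))
print(nearest_action(project(W, lm_encode("the person throws the glass cup"))))
```

Keeping both the LM and the dynamics model fixed means only the small projection has to be learned from paired data, which is one reason a small paired set can be enough in a setup like this one.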

Results

PIGLeT can either predict what happens next symbolically, given a language description, or generate a summary of any state change, and it does well at both tasks. For predicting what happens next symbolically, it outperforms T5-11B and GPT-3, which are trained only on text data from the internet and so struggle to adapt to grounded situations in our finetuning setup.
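Symbolic predictions like these can be scored in more than one way. A minimal sketch, assuming each predicted and gold post-state is a dict mapping hypothetical attribute names to values: per-attribute accuracy credits every attribute independently, while exact match only counts an example when every attribute is right. This illustrates the distinction and is not necessarily the exact metric behind the figures reported above.

```python
def score(predictions, gold):
    """Return per-attribute accuracy and exact-match (all attributes right) accuracy."""
    n_attr = n_attr_correct = n_exact = 0
    for pred, ref in zip(predictions, gold):
        matches = [pred.get(attr) == value for attr, value in ref.items()]
        n_attr += len(matches)
        n_attr_correct += sum(matches)
        n_exact += all(matches)
    return {
        "attribute_acc": n_attr_correct / n_attr,
        "exact_match": n_exact / len(gold),
    }


gold = [
    {"is_broken": True, "is_held": False},
    {"is_broken": False, "is_held": False},
]
predictions = [
    {"is_broken": True, "is_held": False},  # fully correct
    {"is_broken": True, "is_held": False},  # one attribute wrong
]
print(score(predictions, gold))  # {'attribute_acc': 0.75, 'exact_match': 0.5}
```

Exact match is the stricter of the two: a single wrong attribute sinks the whole example.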

Examples

Each example visualizes one of PIGLeT's state change predictions: the model reads the "precondition" and "action" sentences, sees the symbolic "initial states", and predicts the result over all attributes.
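Each example therefore pairs two sentences with symbolic states before and after the action. Below is a minimal sketch of how one such record might be represented, assuming hypothetical field and attribute names (the dataset's real schema is not given here); the sentences echo the glass-cup illustration from the introduction rather than coming from the dataset.

```python
from dataclasses import dataclass, field


@dataclass
class StateChangeExample:
    """One hypothetical example: two sentences plus pre- and post-action symbolic states."""
    precondition: str
    action: str
    initial_state: dict = field(default_factory=dict)
    result: dict = field(default_factory=dict)

    def changed_attributes(self):
        """Map each attribute whose value changes to an (old, new) tuple."""
        return {
            attr: (self.initial_state.get(attr), new)
            for attr, new in self.result.items()
            if self.initial_state.get(attr) != new
        }


example = StateChangeExample(
    precondition="The person is holding a glass cup.",
    action="The person throws the cup.",
    initial_state={"material": "glass", "is_broken": False, "is_held": True},
    result={"material": "glass", "is_broken": True, "is_held": False},
)
print(example.changed_attributes())
# {'is_broken': (False, True), 'is_held': (True, False)}
```

Listing only the changed attributes shows what a model has to get right beyond copying the initial state.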


Contact

Questions about physical grounding, or want to get in touch? Contact Rowan at rowanzellers.com/contact.